Category: tutorial

-

A Data Science Exploration From the Titanic in R

Step aboard the Titanic dataset: Explore, feature-engineer, and model your way to survival predictions with style.

-

Hadoop Tutorial Series, Issue #4: To Use Or Not To Use A Combiner

Explains when Hadoop Combiners help (or hurt) performance and correctness, with code‑level guidance.

-

Hadoop Tutorial Series, Issue #3: Counters In Action

Shows how to instrument MapReduce jobs with Hadoop Counters to track custom metrics during large‑scale processing.

-

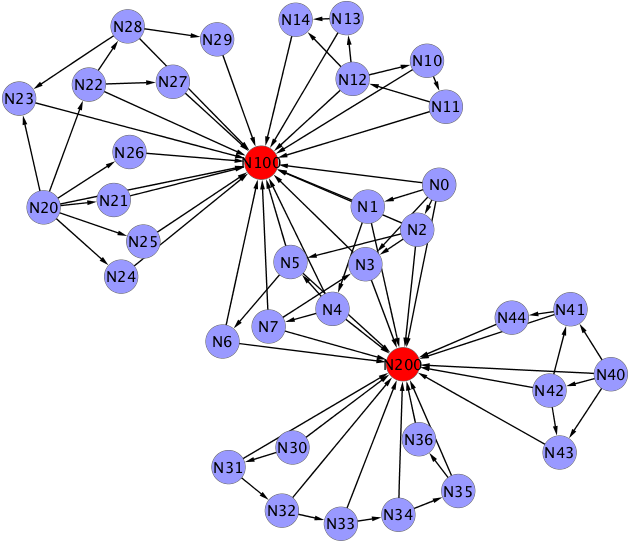

Hadoop Tutorial Series, Issue #2: Getting Started With (Customized) Partitioning

Teaches key partitioning patterns (e.g., partial sorts to specific reducers) to control data flow in MapReduce jobs.

-

Hadoop Tutorial Series, Issue #1: Setting Up Your MapReduce Learning Playground

Step‑by‑step setup of a Cloudera VM + Maven project so you can quickly experiment with Hadoop wordcount and beyond.

-

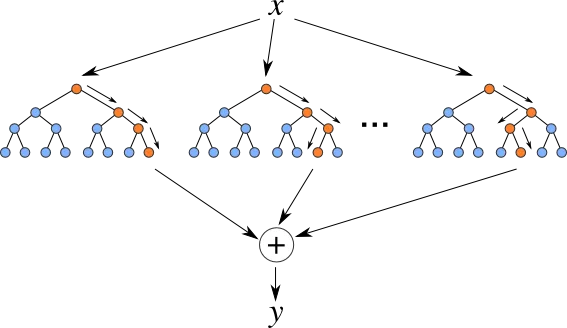

Flexible Collaborative Filtering In JAVA With Mahout Taste

Rapid prototyping approach to a recommendation engine using Mahout Taste’s pluggable similarity and scoring components.

-

Writing A Token N-Grams Analyzer In Few Lines Of Code Using Lucene

Leverages Lucene analyzers to emit token n‑grams for downstream text mining or search tasks with minimal Java.

-

Drawing A Zipf Law Using Gnuplot, Java and Moby-Dick

Let’s use the Moby‑Dick text to generate word frequency plots and illustrate Zipf’s law programmatically.

-

Flexible Java Profiling And Monitoring Using The Netbeans Profiler

We’ll demonstrate NetBeans’ built‑in profiler for low‑friction CPU/memory profiling across Java applications.

-

BeanShell Tutorial: Quick Start On Invoking Your Own Or External Java Code From The Shell

BeanShell is a lightweight scripting language that’s compatible with the Java language. It provides a dynamic environment for executing Java code in its standard syntax, but also allows common scripting conveniences such as loose types, commands, and method closures like those in Perl and JavaScript. It is considered so useful that it should become part…